Device A

Software Results

Device A used a somewhat unconventional approach to session management. The API accepted the same fixed Authorization token for both of our independent accounts. The only unique secret required is the “devicetoken”, which is static for the tracker device and never expires. We were still able to query data more than half a year later with the same parameters, without creating a new session.

We also noticed that the device uses server infrastructure with domain names related to bike tracking. This suggests that the manufacturer reuses this environment for pet tracking, just with a different front end.

To assess the risk of token prediction, we analyzed the statistical randomness of the tokens. The tokens in Device A had sufficient entropy to prevent brute-force prediction attacks, effectively mitigating the risk of session hijacking via token guessing. However, should an attacker obtain the device token in another way, access remains possible indefinitely, because the token never expires.
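A randomness check of this kind can be approximated by estimating the Shannon entropy per character over a sample of tokens. The following is a minimal sketch and not the tooling used in this assessment: the function name is hypothetical, and the sample tokens are generated locally with `secrets.token_hex` as stand-ins for captured tokens.

```python
import math
import secrets
from collections import Counter


def shannon_entropy_per_char(tokens):
    """Estimate Shannon entropy (bits per character) over all characters in the sample."""
    counts = Counter("".join(tokens))
    total = sum(counts.values())
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


# Hypothetical sample: locally generated hex tokens standing in for captured ones.
sample = [secrets.token_hex(16) for _ in range(200)]
bits_per_char = shannon_entropy_per_char(sample)
estimated_bits = bits_per_char * len(sample[0])

# Zero-padded sequential counters as a predictable counterexample.
predictable = [f"{i:032d}" for i in range(200)]
weak_bits_per_char = shannon_entropy_per_char(predictable)

print(f"random sample:      {bits_per_char:.2f} bits/char, ~{estimated_bits:.0f} bits/token")
print(f"predictable sample: {weak_bits_per_char:.2f} bits/char")
```

Character frequency is only a first-order estimate. It does not detect structure such as embedded timestamps or counters with a uniform digit distribution, so a high value is a necessary but not a sufficient indicator of unpredictability.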

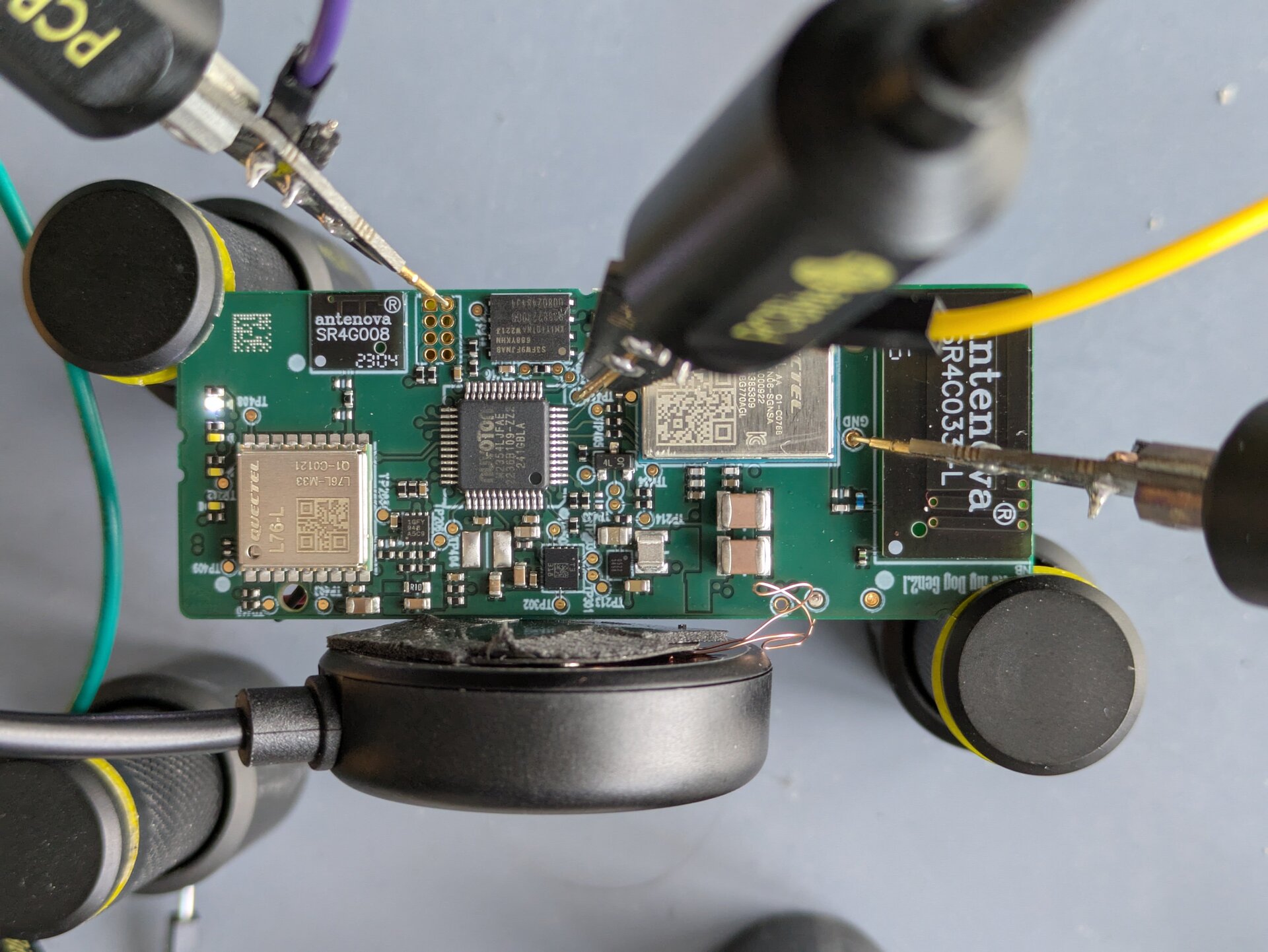

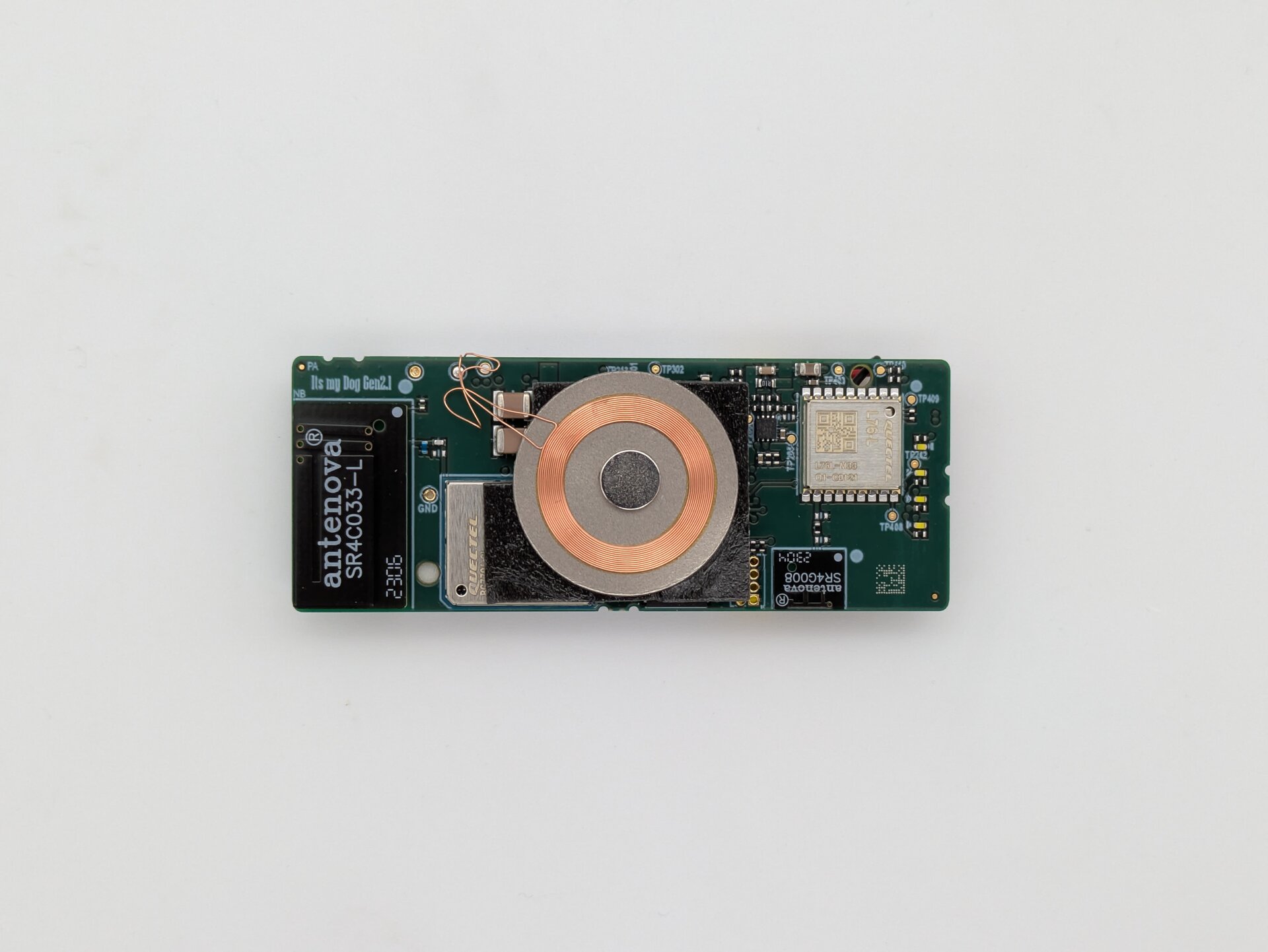

Hardware Results

The device is built on a Quectel BG770A-GL LTE modem and a Nuvoton M2354LJFAE microcontroller. We identified a debug footprint for the Nuvoton microcontroller and performed a memory readout via the unlocked Serial Wire Debug (SWD) interface. As the analysis of bare-metal firmware is time-consuming, no in-depth analysis could be performed. A quick initial analysis showed no interesting strings. Additionally, we captured the UART communication between the microcontroller and the modem, as well as output from the modem itself. The traffic consisted of standard AT commands such as QISEND and QIRD. The findings suggest a binary protocol for server communication, which would again require considerably more effort to analyze further.
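A first pass over such a UART capture is often just a matter of listing which AT commands occur and with which parameters. The sketch below assumes the capture has already been split into text lines. The sample lines are illustrative and only follow the general `AT+<CMD>=<params>` shape; they are not taken from the actual capture, and the function name is hypothetical.

```python
import re
from collections import Counter

# Matches extended AT commands in set/execute form, e.g. "AT+QISEND=0,12".
AT_COMMAND = re.compile(r"^AT\+(?P<name>[A-Z0-9]+)(?:=(?P<args>.*))?$")


def parse_at_commands(lines):
    """Return (name, [args]) tuples for every AT command line in a capture."""
    commands = []
    for line in lines:
        match = AT_COMMAND.match(line.strip())
        if match:
            args = match.group("args")
            commands.append((match.group("name"), args.split(",") if args else []))
    return commands


# Illustrative capture, not real device traffic.
capture = [
    "AT+QISEND=0,12",
    ">",
    "SEND OK",
    "AT+QIRD=0,1500",
    "+QIRD: 24",
    "OK",
]

parsed = parse_at_commands(capture)
histogram = Counter(name for name, _ in parsed)
print(parsed)
print(histogram)
```

Responses such as `+QIRD: 24` and prompts are deliberately skipped here. The binary payload that follows a send or read command would have to be extracted separately before the server protocol itself can be analyzed.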

Figure 1: Device A, a Quectel modem and Nuvoton microcontroller

Device B

Software Results

Device B used JSON Web Tokens (JWT) for session authorization, an approach whose security depends on the integrity of the signing process and on correct server-side validation.

We tested for common JWT implementation flaws, such as the "none" algorithm attack and the use of weak signing secrets. We found the implementation to be secure, with the backend correctly rejecting tokens with modified headers or signatures. Furthermore, the authorization checks for Device B covered the entire user lifecycle:
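The "none" algorithm test can be illustrated with a few lines of standard-library Python: the header of an existing token is rewritten to `alg: none` and the signature is dropped, while the payload stays unchanged. This is a sketch of the general technique, not the tooling used in the assessment. The sample token, its claims, and the placeholder signature are made up for illustration.

```python
import base64
import json


def b64url(data: bytes) -> str:
    """Base64url-encode without padding, as used in JWT segments."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def b64url_decode(segment: str) -> bytes:
    """Decode a base64url segment, restoring the stripped padding first."""
    return base64.urlsafe_b64decode(segment + "=" * (-len(segment) % 4))


def forge_none_token(token: str) -> str:
    """Rewrite the header to alg=none and drop the signature; the payload is kept."""
    header_b64, payload_b64, _signature = token.split(".")
    header = json.loads(b64url_decode(header_b64))
    header["alg"] = "none"
    forged_header = b64url(json.dumps(header, separators=(",", ":")).encode())
    return f"{forged_header}.{payload_b64}."


# Hypothetical token with a placeholder signature (not a valid HMAC).
original = ".".join([
    b64url(json.dumps({"alg": "HS256", "typ": "JWT"}).encode()),
    b64url(json.dumps({"sub": "user-a"}).encode()),
    b64url(b"placeholder-signature"),
])
forged = forge_none_token(original)
forged_header = json.loads(b64url_decode(forged.split(".")[0]))
print(forged)
```

A correctly implemented backend rejects the forged token, because it pins the accepted algorithms on the server side instead of trusting the `alg` value supplied in the header.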

- Tracker Registration: The process of claiming a new tracker was verified to require a unique, non-predictable identifier, preventing "tracker squatting" where an attacker could claim a device before the legitimate owner.

- History Access: The API correctly validated that historical location data could only be retrieved by the authenticated owner of the specific tracker.

- Billing Security: Access to invoices was strictly restricted. An attempt to access an invoice using a different account’s token failed.

Additionally, we analyzed the password reset flow for classic account takeover vulnerabilities and found none.

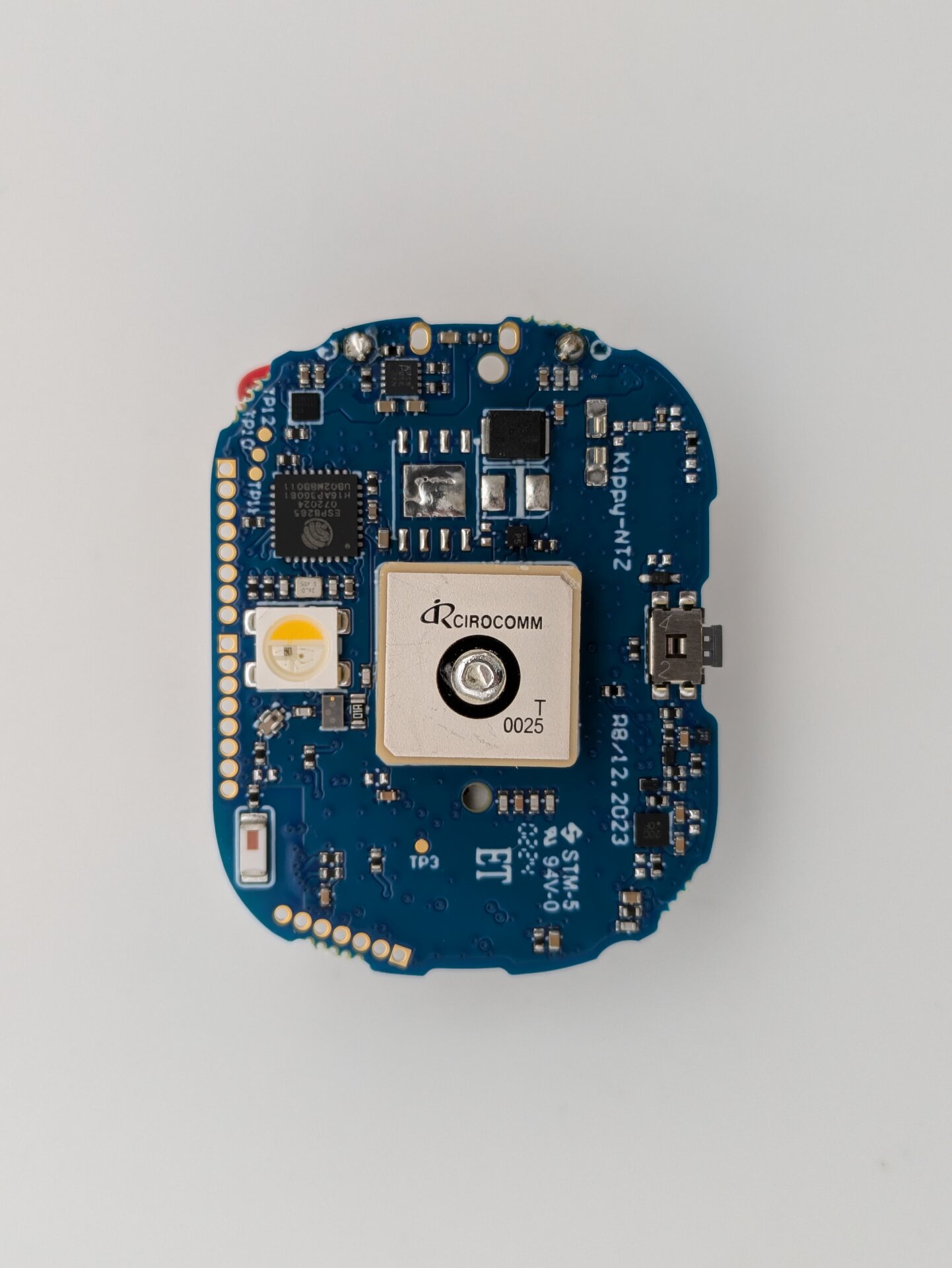

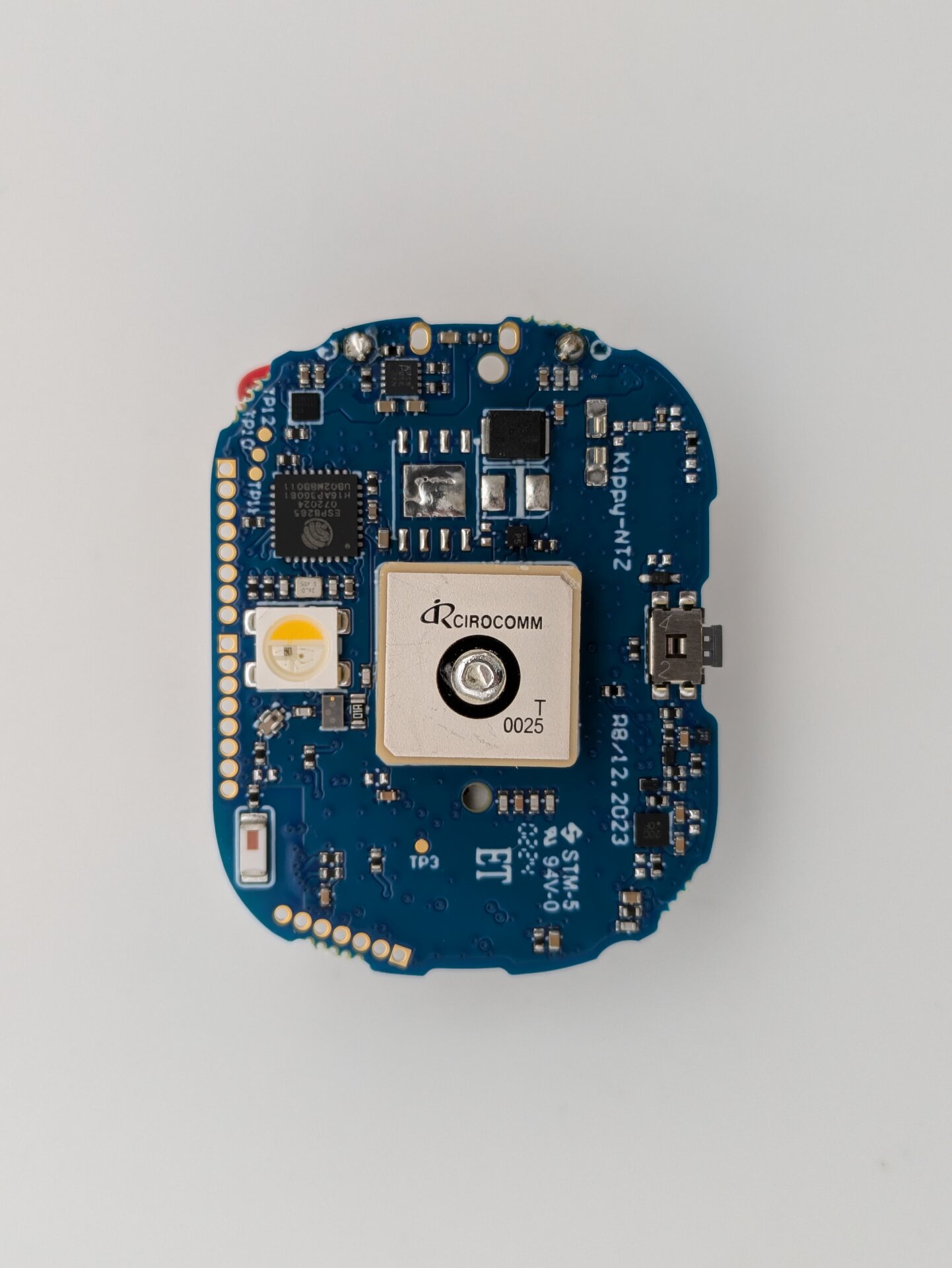

Hardware Results

Device B is based on an ESP8285 and an STC STC8G2K64S4 microcontroller. Unlike with Device A, we could not gain access to the device, although we identified some footprints that were likely used for development. We were also unable to identify internal communication between the different components.

Figure 2: Device B, ESP8285 module and STC microcontroller

Device C

Software Results

Device C was similar to Device B, as it also used JWT for session management.

The assessment of Device C also prioritized authorization, checking whether one user could trigger commands on another user’s device. This is an interesting area for pet tech, as the ability to remotely trigger a sound or change names could be used for harassment. The results confirmed that the backend validated the ownership relationship for every command execution.
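The cross-account check described above can be thought of as a test matrix: every user attempts every command on every tracker, and any success on a tracker the user does not own is a finding. The sketch below runs this matrix against an in-memory stand-in. `FakeBackend`, the command names, and the status codes are assumptions for illustration and do not reflect the real API of any assessed device.

```python
from dataclasses import dataclass, field


@dataclass
class FakeBackend:
    """Hypothetical stand-in for a tracker API; not a real vendor interface."""
    owners: dict = field(default_factory=dict)  # tracker_id -> owning user_id
    enforce: bool = True                        # False simulates a missing ownership check

    def send_command(self, user_id: str, tracker_id: str, command: str) -> int:
        # HTTP-like status: 403 when ownership is enforced and the caller is not the owner.
        if self.enforce and self.owners.get(tracker_id) != user_id:
            return 403
        return 200


def cross_account_check(backend, ownership, commands):
    """Try every user/tracker/command combination and collect unauthorized successes."""
    findings = []
    users = sorted(set(ownership.values()))
    for user in users:
        for tracker, owner in sorted(ownership.items()):
            for command in commands:
                status = backend.send_command(user, tracker, command)
                if status == 200 and owner != user:
                    findings.append((user, tracker, command))
    return findings


ownership = {"tracker-1": "user-a", "tracker-2": "user-b"}
commands = ["play_sound", "rename"]  # hypothetical command names

secure_findings = cross_account_check(FakeBackend(ownership), ownership, commands)
broken_findings = cross_account_check(FakeBackend(ownership, enforce=False), ownership, commands)
print(secure_findings)
print(broken_findings)
```

Against a real API, `send_command` would wrap an authenticated HTTP request made with the respective test account's token. An empty findings list corresponds to the result reported for Device C.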

We also checked authorization for location history, invoices, order PDFs, and tracker status. No issues were identified in these APIs.

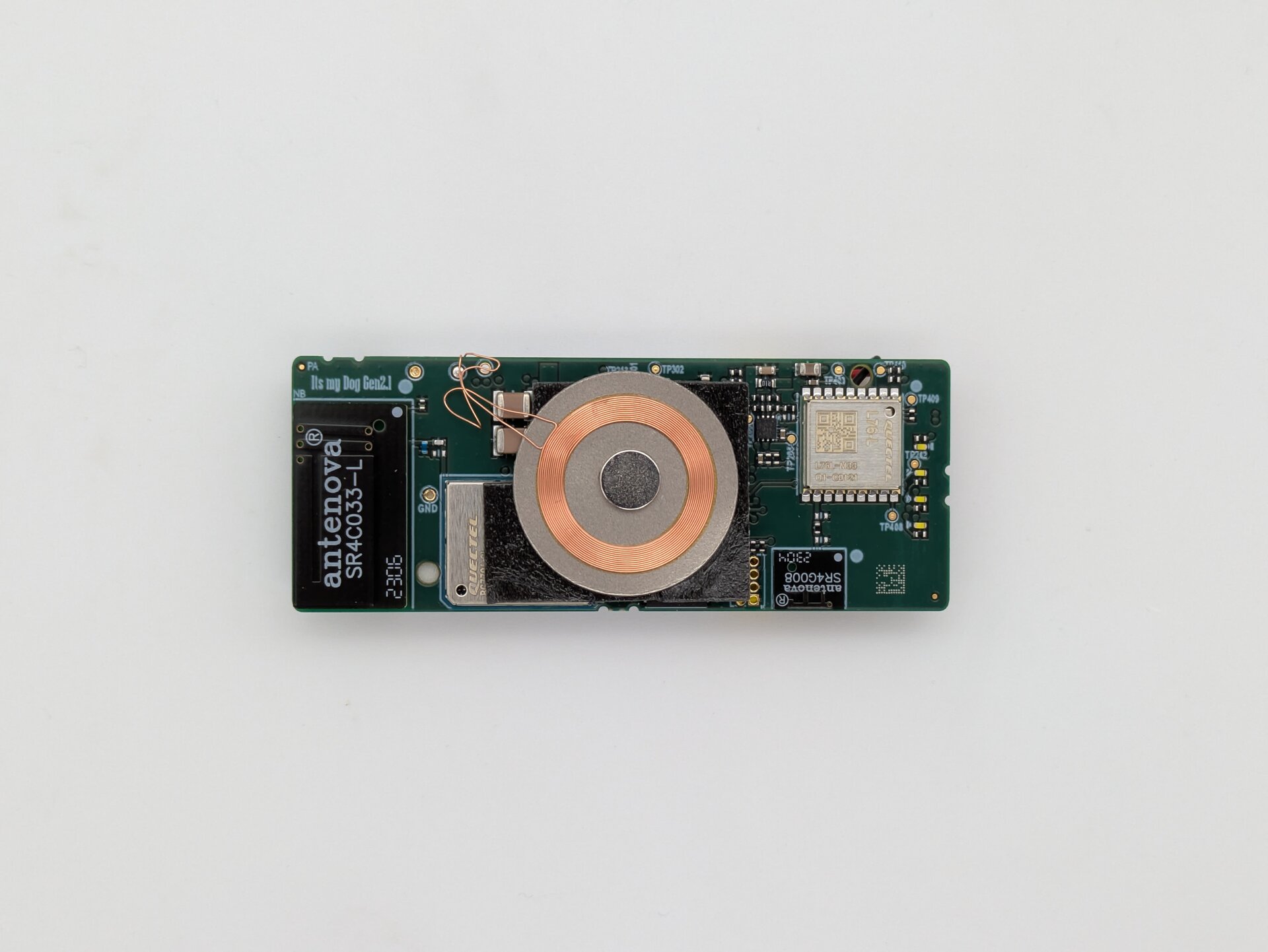

Hardware Results

Device C is built on a Simcom A7670G modem, from a manufacturer SEC Consult already has experience with, and proved to be the most monolithic design. We identified a system UART interface that yielded a boot log. A second UART is dedicated to communication in the GNSS NMEA format, which is used to read location data from the GPS module in real time. We were also able to activate the download mode for programming the device by shorting the UBOOT pin to GND. Further exploitation via this mode might be feasible but would need more time.
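Location data on such a UART arrives as plain-text NMEA sentences, so it can be decoded with very little code. The following sketch validates the XOR checksum of a GGA sentence and converts the position to decimal degrees. The sample sentence is a generic illustration, not data captured from the device, and its checksum is computed in the code rather than hardcoded.

```python
from functools import reduce


def nmea_checksum(body: str) -> str:
    """XOR of all characters between '$' and '*', as two uppercase hex digits."""
    return f"{reduce(lambda acc, ch: acc ^ ord(ch), body, 0):02X}"


def _to_degrees(value: str, hemisphere: str, degree_digits: int) -> float:
    """Convert NMEA (d)ddmm.mmmm notation to signed decimal degrees."""
    degrees = int(value[:degree_digits])
    minutes = float(value[degree_digits:])
    decimal = degrees + minutes / 60
    return -decimal if hemisphere in ("S", "W") else decimal


def parse_gga(sentence: str):
    """Validate the checksum of a GGA sentence and return (lat, lon) in decimal degrees."""
    body, _, checksum = sentence.strip().lstrip("$").partition("*")
    if nmea_checksum(body) != checksum.upper():
        raise ValueError("checksum mismatch")
    fields = body.split(",")
    if not fields[0].endswith("GGA"):
        raise ValueError("not a GGA sentence")
    lat = _to_degrees(fields[2], fields[3], degree_digits=2)
    lon = _to_degrees(fields[4], fields[5], degree_digits=3)
    return lat, lon


# Illustrative sentence body; the checksum is appended programmatically.
body = "GPGGA,123519,4807.038,N,01131.000,E,1,08,0.9,545.4,M,46.9,M,,"
sentence = f"${body}*{nmea_checksum(body)}"
lat, lon = parse_gga(sentence)
print(f"{lat:.4f}, {lon:.4f}")
```

Because the format is unauthenticated plain text, anyone with physical access to this UART can read the current position directly, which is why the interface is worth noting in a hardware assessment.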

Figure 3: Device C, built on a Simcom modem

Device D

Software Results

Device D included a feature for uploading pet images, which we examined more thoroughly. We tested the authorization checks for all critical features and identified no vulnerabilities here either. As with the other devices, we also assessed the password reset flow and found no issues.

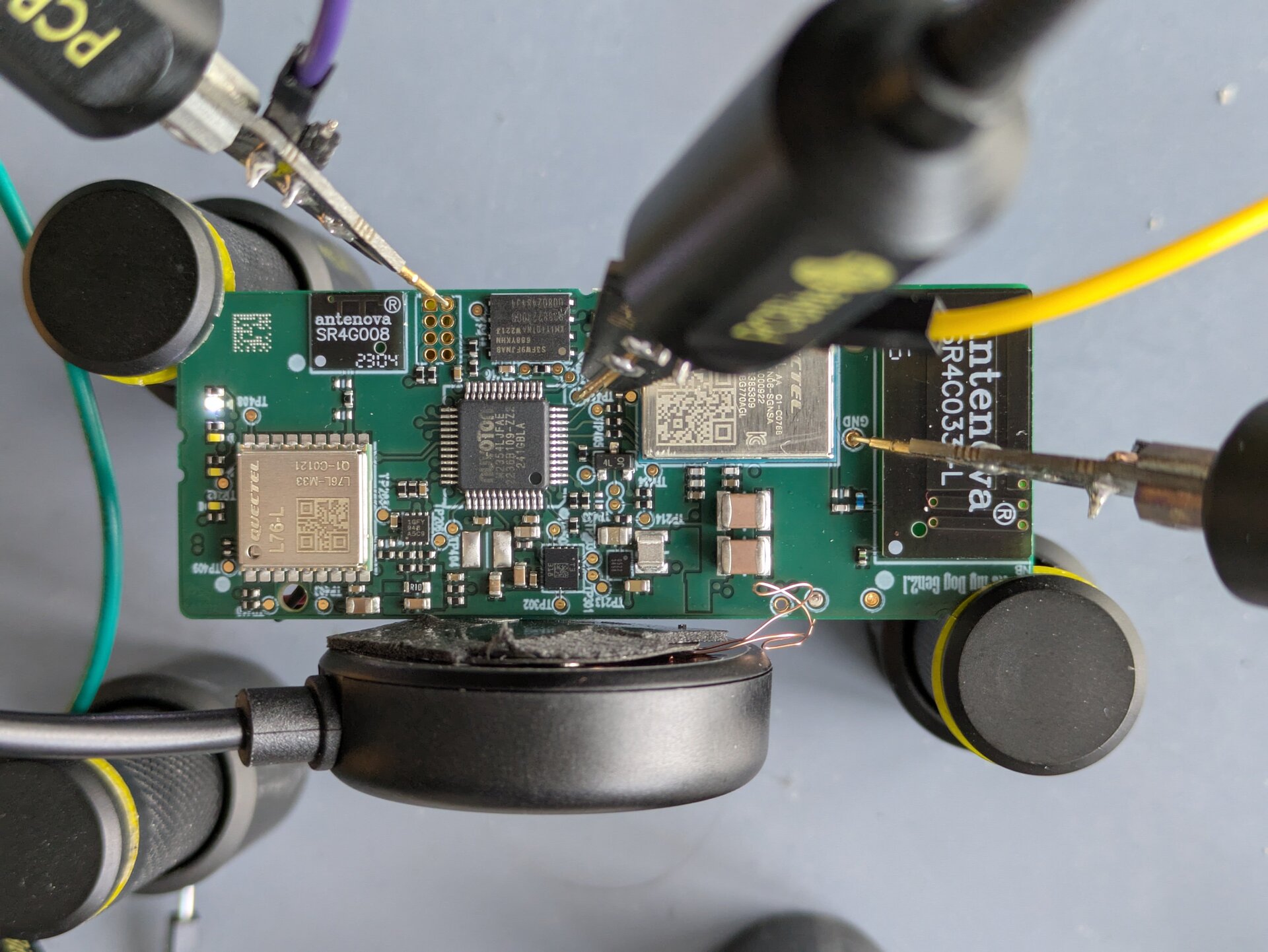

Hardware Results

This device again uses a multi-chip architecture with an ESP8285 and an nRF52832 microcontroller. Debug footprints were identified for both microcontrollers. We successfully connected to the ESP8285 UART (which provided a boot log) and read out the memory using esptool. As with Device A, a quick analysis of the dumped memory did not immediately reveal interesting plain-text strings. There was not enough time for a comprehensive analysis of the firmware, which is again time-consuming due to its bare-metal nature.
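A quick string triage of a memory dump, similar in spirit to the Unix `strings` tool, can be sketched as follows. The blob below is synthetic and only stands in for a firmware dump. The keyword list and the function name are assumptions chosen for illustration.

```python
import re


def extract_strings(blob: bytes, min_length: int = 6):
    """Return printable ASCII runs of at least min_length bytes."""
    pattern = re.compile(rb"[\x20-\x7e]{%d,}" % min_length)
    return [m.group().decode("ascii") for m in pattern.finditer(blob)]


# Synthetic blob standing in for a firmware dump.
blob = (
    b"\x00\x01"
    + b"http://example.invalid/api"
    + b"\xff\xfe"
    + b"abc"
    + b"\x00"
    + b"AT+QISEND"
    + b"\x00"
)

found = extract_strings(blob)

# Hypothetical keyword filter for strings worth a closer look.
keywords = ("http", "key", "pass", "token")
interesting = [s for s in found if any(k in s.lower() for k in keywords)]
print(found)
print(interesting)
```

For a real dump, the bytes would be read from the file written by the readout tool, for example with `pathlib.Path("dump.bin").read_bytes()`, where `dump.bin` is a placeholder name. An empty result only shows that nothing is stored as plain ASCII; compressed, encoded, or encrypted data would not show up this way.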

Figure 4: Device D, ESP8285 module and nRF52832 microcontroller

Conclusion

The results of this analysis suggest that while technical security controls are becoming more standard, the privacy architecture of the pet tracking industry remains problematic.

The hardware assessment across all four trackers revealed no standard architecture. Instead, the devices are complex systems built from multiple microcontrollers and communication modules. In the most extensive case, this means two main processing units, a GPS module, and an LTE module with eSIM, all of them running some RTOS variant or bare-metal firmware. These designs are therefore more complex than typical IoT devices, which are often based on a single SoC running a standard embedded Linux. Anyone interested in breaking such devices with physical access should plan multiple days for the task.

As billions of devices become co-located with our daily lives, the boundary between animal and human data will continue to blur. Independent security research is an important mechanism capable of providing the transparency required to protect consumers in this new reality.

The methodology demonstrated in this blog post shows that it is possible to conduct high-impact security research without "crossing the line" into unethical activity. The fact that the assessed devices were not found exploitable in this instance speaks for the effectiveness of current baseline standards. Although we could not break these devices "in a day", in-depth assessments with sufficient resources (ideally including whitebox methods such as source code reviews) are highly advisable to create a robust and secure base. This is even more important because the main obligations of the CRA (Cyber Resilience Act) will apply from December 2027, imposing cybersecurity requirements on products with digital elements sold within the EU market. Moreover, the inherent privacy risks of constant location tracking remain a fundamental issue that technical security alone cannot solve. Technical security must be complemented by organizational measures.

We hope this kind of work encourages other researchers to approach consumer IoT in the same way. By operating with transparency, respecting the privacy of third parties, and focusing on systemic logic flaws rather than destructive exploits, researchers can help ensure that the "peace of mind" marketed by pet tech manufacturers is built on a foundation of genuine security and respected privacy.

This blog post was produced by the technical research team consisting of Gerhard Hechenberger and Bernhard Gründling at SEC Consult Vulnerability Lab, with project coordination by Adriane Würfl. Special thanks go to Thea, whose daily walks provided the real-world GPS tracking data that made this research possible. The results reflect the state of the devices as of the date of analysis.